1 min read

How To Choose A Marketing Agency

The right marketing agency has experience in your industry, can show proof of results, and fits your budget. Start by defining what you actually...

Does any of the following sound familiar?

In some cases, all of these situations may sound familiar.

When this type of uncertainty occurs, the suggestion is often to run a test to see what performs better. But what does it really mean to test your marketing?

In this article, we'll explore different ways to test your marketing, the advantages and disadvantages of each, and what you need to know before you test, regardless of your budget size.

We won't cover more involved testing methods such as focus groups or surveys. For more information on those, check out our post on qualitative and quantitative marketing research methods.

Ad testing pairs different elements in your ads to see how they perform.

No matter how much money you put into your advertising campaign or paid media, or which influencers you use, if your ads do not connect with and influence your target audience, that spend is wasted.

Your goal in ad testing should be to understand your customers better than your competitors do. What do they engage with, what helps them make a purchase decision, and what makes them leave before converting?

When you have confidence in the effectiveness of your marketing, you can do more with less.

Sometimes referred to as split testing, A/B testing is a valuable method to understand the effectiveness of your marketing.

Through this approach, marketers can identify which version of an ad, email campaign, or landing page leads to more conversions.

The essence of A/B testing is comparing two versions (A and B) to determine which performs better in achieving a set objective. Here's how it works:

Step 1: Define Your Objective

At the very heart of an A/B test is the hypothesis. Before you start, determine what you're trying to achieve. Outline a clear theory of what you expect to learn. This hypothesis will be rooted in the goal of your test, whether it's increasing clicks, boosting open rates, or improving lead conversions.

Your hypothesis might look like this: "Changing the call-to-action button from green to blue will lead to a higher conversion rate."

A clearly defined hypothesis focuses the test and provides something to measure against.

Step 2: Select Your Variables

The next step is to design your A and B variations.

These two versions should be identical except for the one element you are testing.

If the variable is the color of a button, Version A might have a green button, while Version B showcases a blue one.

The key is to ensure that only one variable is changed. If multiple elements are altered simultaneously (e.g., the hero images are also different), it will be hard to determine which specific change contributed to the results.

Step 3: Split Your Audience

While it might be tempting to roll out the A/B test to your entire audience, selecting a representative sample is best.

Divide your audience into two random but equal groups. One group will only see version A, and the other will only see version B.

Distributing the two versions to a random and sufficiently large portion of your target audience helps ensure that biases in the groups don't skew the results.
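As a rough illustration of the random split described above, here is a minimal Python sketch. The `split_audience` function, the `user_ids` list, and the fixed `seed` are hypothetical names used only for this example; most ad and email platforms handle this assignment for you.

```python
import random


def split_audience(user_ids, seed=42):
    """Shuffle the audience and split it into two equal-sized random groups."""
    shuffled = list(user_ids)
    # A seeded generator keeps the split reproducible for this example.
    random.Random(seed).shuffle(shuffled)
    half = len(shuffled) // 2
    group_a = shuffled[:half]
    # If the audience size is odd, the one leftover user is left out.
    group_b = shuffled[half:half * 2]
    return group_a, group_b


# Hypothetical audience of 1,001 users.
audience = [f"user_{i}" for i in range(1001)]
group_a, group_b = split_audience(audience)
print(len(group_a), len(group_b))  # prints: 500 500
```

Each user lands in exactly one group, so nobody sees both versions.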

Various factors, such as your total audience size, expected conversion rates, and the desired confidence level, will determine your sample size.
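To make the sample size factors more concrete, the sketch below uses a common textbook approximation for comparing two conversion rates. It is one possible approach, not the only one. The function name, the example rates, and the 80% statistical power default are assumptions added for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate, expected_rate,
                          confidence=0.95, power=0.80):
    """Approximate users needed per version to detect the expected lift.

    Uses the standard normal approximation for a two-sided comparison
    of two proportions. `power` is an assumed default, not a fixed rule.
    """
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical example: 5% baseline conversion rate, hoping to reach 6%.
needed = sample_size_per_group(0.05, 0.06)
print(f"About {needed} users per version")
```

The smaller the difference you hope to detect, the larger the sample you need, which is why small-budget tests work best when the expected change is meaningful.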

Step 4: Conduct the Test

With the designs ready and your audience segmented, it's time to run the test.

Run both versions simultaneously to prevent variables like the day of the week or the time of day from affecting the results.

Continue to monitor the test, ensuring no interference or additional changes are made during the testing period.

Running the test long enough to make an informed decision is important. This could range from a few days to several weeks, depending on the nature of the test and the response rate.
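If you already have a target sample size per version and a rough idea of daily traffic, a quick back-of-the-envelope estimate of test length might look like the following. The function name and the example figures are hypothetical.

```python
import math


def estimated_test_days(sample_size_per_group, daily_visitors, groups=2):
    """Estimate how many days are needed to reach the target sample size."""
    total_needed = sample_size_per_group * groups
    return math.ceil(total_needed / daily_visitors)


# Hypothetical example: 8,000 users per version, 1,200 visitors per day.
print(estimated_test_days(8000, 1200))  # prints: 14
```

Rounding up to full weeks is a reasonable habit, since it keeps every day of the week equally represented in both versions.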

Step 5: Analyze the Results

Upon conclusion of the test duration, it's time to analyze the results.

Examine the performance metrics of each version against your original hypothesis.

For instance, if the goal was to improve click-through rates, compare the rates of Version A to Version B.

If one version outperforms the other significantly, and the results are statistically significant, you've gained valuable insight into the preferences and behaviors of your audience.
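One common way to check statistical significance for conversion or click-through rates is a two-proportion z-test. The sketch below is a minimal version using only the Python standard library. The function name and the example counts are made up for illustration, and most testing platforms report this for you.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare two conversion rates and return (rate_a, rate_b, z, p_value).

    Assumes both groups have at least one conversion and one
    non-conversion overall, so the standard error is not zero.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_a, rate_b, z, p_value


# Hypothetical counts: Version A converts 200 of 5,000; Version B 260 of 5,000.
rate_a, rate_b, z, p_value = two_proportion_z_test(200, 5000, 260, 5000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z: {z:.2f}  p: {p_value:.4f}")
```

With these example counts the p-value falls below 0.05, the threshold many marketers use, so the difference would usually be treated as statistically significant.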

However, it's vital to approach the results with a critical mind.

While Version B might have outperformed Version A in your test, consider what other factors, if any, could have influenced the outcome.

Some things that may impact your test outside of your control are seasonality, significant events or promotions, news or PR events, or competitor activity.

Step 6: Implement Learnings and Keep Testing

If one version clearly outperforms the other, consider adopting the winning version for broader use.

If there's no clear winner, take note of the insights gathered and use them to guide future tests.

It's important to realize that A/B testing is an ongoing process. Even after you've found a winning version, there's always room for further optimization.

Continue testing other variables, like images or headlines, or run follow-up tests after a while to confirm and further refine your approach.

A/B testing can offer a more strategic approach to marketing decisions, eliminating guesswork and basing decisions on actual user behavior.

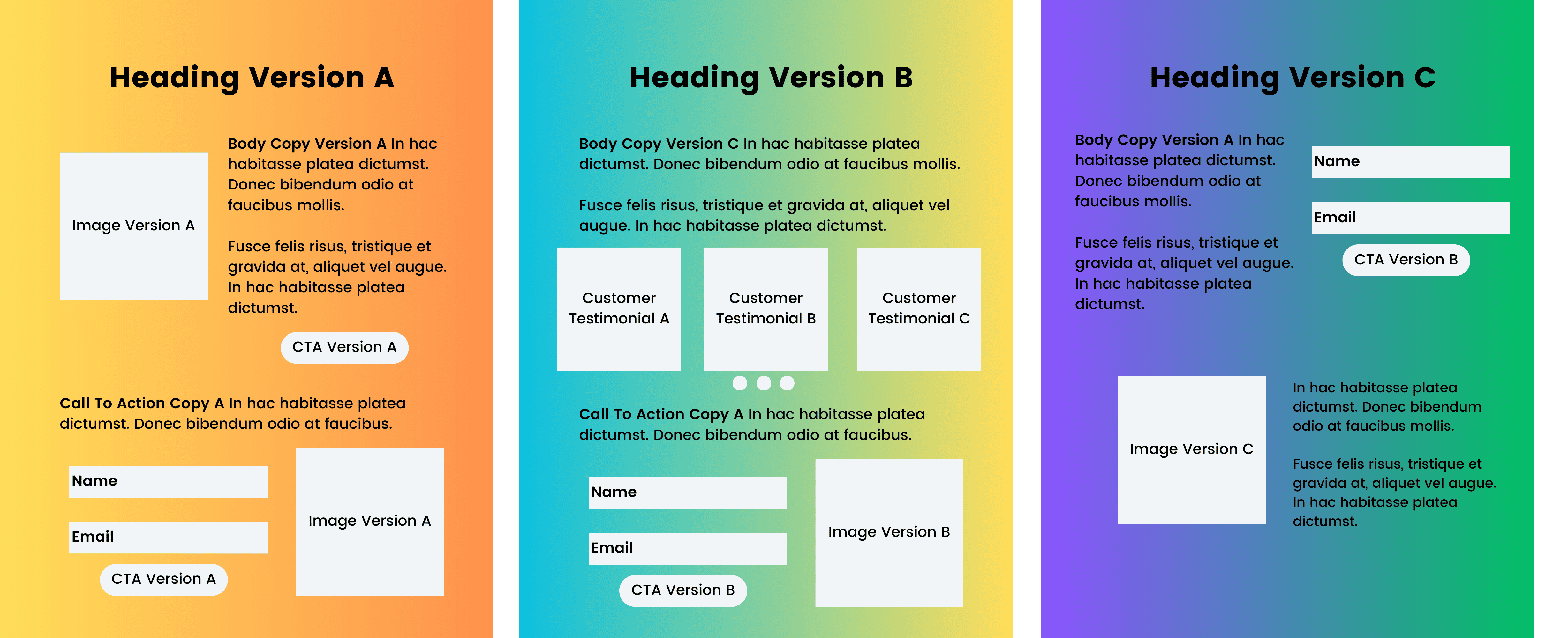

Multivariate testing differs from A/B testing because it involves testing multiple combinations of elements at the same time.

Typically used when testing landing pages, multivariate testing allows you to change several items on a single page so that one version of the page might look radically different from the other.

For example, you may want to test a different headline, header image, and location of the lead form to see how it impacts your conversion rate. You would then divide traffic equally among the different pages in the test.

The drawback, however, is that if each of the pages you're testing does not get enough page views, you won't have solid data with which to make a decision.

The more page element combinations you test, the more time you'll need to conduct the test.
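To see how quickly combinations add up, the sketch below enumerates every combination of the three page elements from the example above, with two options each. The option labels and the daily traffic figure are placeholders.

```python
from itertools import product

# Hypothetical options for each page element being tested.
elements = {
    "headline": ["Headline A", "Headline B"],
    "header_image": ["Image A", "Image B"],
    "form_location": ["top", "bottom"],
}


def build_variations(elements):
    """Return one dict per combination of element options."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


variations = build_variations(elements)
daily_visitors = 2000  # assumed traffic, split equally across pages

print(len(variations))                   # prints: 8
print(daily_visitors / len(variations))  # prints: 250.0
```

Adding a third option to any one element raises the count from 8 to 12 pages, and each page then receives a smaller share of the same traffic.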

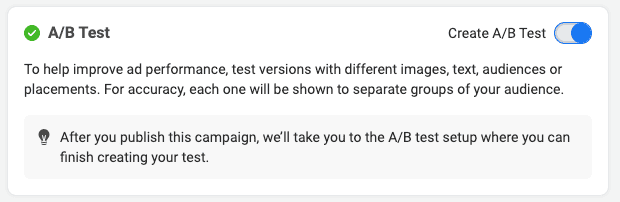

Thankfully, Meta has made testing your Instagram and Facebook ads fairly straightforward within Ads Manager.

Creative Testing

Testing your ad creative can include testing different asset types, such as static images, videos, and UGC creatives. It also includes pairing different headlines and descriptions to see what your customers engage with best.

You can perform these tests within the same campaign using Meta's A/B test option or create a separate test campaign.

Whichever you select, try to ensure that the time frame and budget are similar to get an accurate comparison.

Ad Copy Testing

Testing ad copy can include variables such as copy length, emoji use, and tone. As with most of the other tests we'll discuss, try to limit how many elements you're testing at once so you don't spread your data too thin.

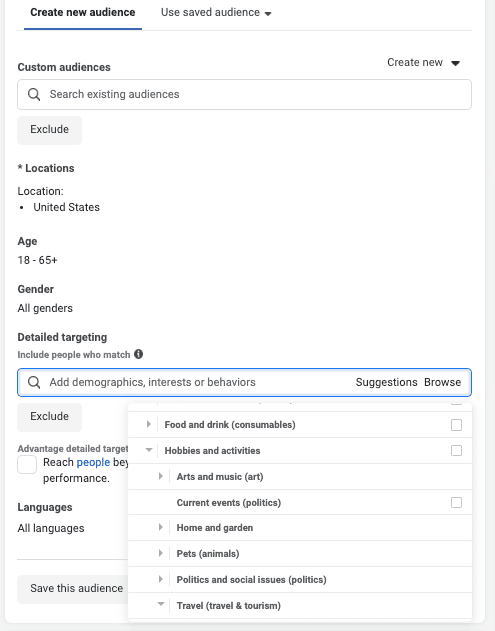

Audience Testing

Testing your audience targeting can prove valuable, especially as Meta and others increase their focus on user privacy.

Since Meta does not share the same audience targeting information it once did, it's important to test new audience approaches to ensure your ads are targeted most effectively.

For example, you may want to test targeting multiple interests in the same ad set instead of selecting a single interest. Or test different interests against each other.

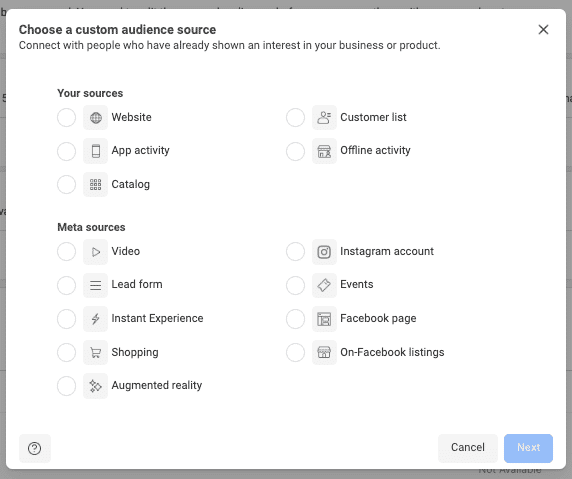

You can also leverage Meta's audience tools to create and test various custom audiences, such as website visitors, engaged audiences, past purchasers, or lookalike audiences.

CTA & Landing Page (& More)

Within Meta Ads Manager, there are additional tests you can run, such as call-to-action or landing page tests. If you're just beginning to test your creative, or you are not spending a significant amount with Meta, we recommend you focus on one or two initial tests and build from there.

It's better to uncover a few insights and make continued improvements than to try and boil the ocean at once.

As with Meta, there are several tests that you can perform in Google Ads.

Testing Bidding Strategies

Testing your bidding strategies in Google Ads is a key practice when optimizing your ROI.

This process involves experimenting with different bidding options, such as cost-per-click (CPC), cost-per-acquisition (CPA), or automated strategies like Target CPA and Target ROAS, to identify which one effectively aligns with your campaign goals—be it driving website traffic, increasing conversions, or maximizing revenue.

Conducting A/B tests by running campaigns concurrently with varied bidding strategies can help you gather comparative data and insights into the impact of each strategy.
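Once both campaigns have run, comparing them usually comes down to a few ratios. The sketch below calculates cost per acquisition (CPA) and return on ad spend (ROAS) for two strategies. The campaign figures are placeholder numbers chosen for the example, not real results.

```python
def summarize(cost, conversions, revenue):
    """Return CPA and ROAS for a campaign, or None where undefined."""
    cpa = cost / conversions if conversions else None
    roas = revenue / cost if cost else None
    return {"cpa": cpa, "roas": roas}


# Placeholder figures for two campaigns run at the same time.
results = {
    "Manual CPC": summarize(cost=1000.0, conversions=40, revenue=3200.0),
    "Target CPA": summarize(cost=1000.0, conversions=50, revenue=3500.0),
}

for strategy, metrics in results.items():
    print(f"{strategy}: CPA ${metrics['cpa']:.2f}, ROAS {metrics['roas']:.2f}")
```

Which metric matters most depends on the campaign goal: CPA for lead or conversion volume, ROAS for revenue.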

Testing Keywords

This involves identifying and evaluating various keywords to determine which ones most effectively drive traffic and conversions among your target audience.

To avoid your campaigns becoming overwhelmed, we recommend employing a systematic approach that begins with keyword research to generate a list of potential search terms, then separating them into different ad groups and campaigns to gather performance data.

When a keyword eats up a significant portion of your budget without delivering results, lower your bid or pause the keyword entirely.

If you believe the keyword should be performing better, consider testing your ad copy in a separate ad group or campaign.
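A simple way to spot such keywords is to compare each keyword's share of total spend with its cost per conversion. The sketch below is one possible rule of thumb. The 25% budget share threshold, the $50 target CPA, and the keyword data are all assumptions for illustration.

```python
def flag_keywords(keywords, max_budget_share=0.25, target_cpa=50.0):
    """Return keywords that take a large budget share and miss the target CPA."""
    total_cost = sum(k["cost"] for k in keywords)
    flagged = []
    for k in keywords:
        share = k["cost"] / total_cost if total_cost else 0.0
        # Keywords with no conversions are treated as infinitely expensive.
        cpa = k["cost"] / k["conversions"] if k["conversions"] else float("inf")
        if share > max_budget_share and cpa > target_cpa:
            flagged.append(k["keyword"])
    return flagged


# Placeholder keyword performance data.
keywords = [
    {"keyword": "marketing agency", "cost": 600.0, "conversions": 5},
    {"keyword": "ad testing tool", "cost": 250.0, "conversions": 10},
    {"keyword": "split test ads", "cost": 150.0, "conversions": 4},
]

print(flag_keywords(keywords))  # prints: ['marketing agency']
```

Flagged keywords are candidates for a lower bid, a pause, or a separate ad copy test, not automatic removals.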

Testing Ad Copy

Testing ad copy by running different versions of an ad's headlines, descriptions, calls to action, and even display URLs can help improve your conversion rates.

To test your ad copy, run two or more versions of your ad simultaneously, under similar conditions, to compare their effectiveness.

As with all the tests we've described, it's important that your ad copy test be conducted with substantial traffic and over an appropriate period to ensure its reliability. This may mean that you will want to turn off the optimized ad rotation feature in your campaign settings so that each version is shown more evenly.

Consider the relevance of your ad copy to the corresponding keywords and landing pages as well. Testing your ad copy can significantly improve the performance of your Google Ads campaigns, leading to more conversions and qualified leads.

Landing Page Tests

While not part of the ad, the landing page plays an important role and should also be considered for testing.

There are two approaches to testing your landing page. The first is through a multivariate test, which we've already detailed above.

The second is by changing the final URL of your ads to different pages. In running the test this way, you can send traffic from the same ad to different pages to see if that impacts the conversion rate.

We may hate to admit it, but most people—and companies—are risk averse. It's hard to say "yes" to a marketing concept or idea that is unique—especially when you have a limited budget.

Implementing a culture of testing around your marketing not only helps you better understand your customers and their journey, but also gives you a framework for pushing the envelope and trying new things in your marketing through short-term risks.

Ultimately, testing and optimizing your marketing can play a significant role in the long-term success of your marketing. Knowing how to test your ads and landing pages effectively can save you time and money and make you a better marketer.
